In an attempt to ‘redefine’ the ‘often testy’ relationship between online publishers and search engines, Microsoft plans to work with European media owners to protect and profit from copyrighted material online, IHT reports.

Tag Archives: search engines

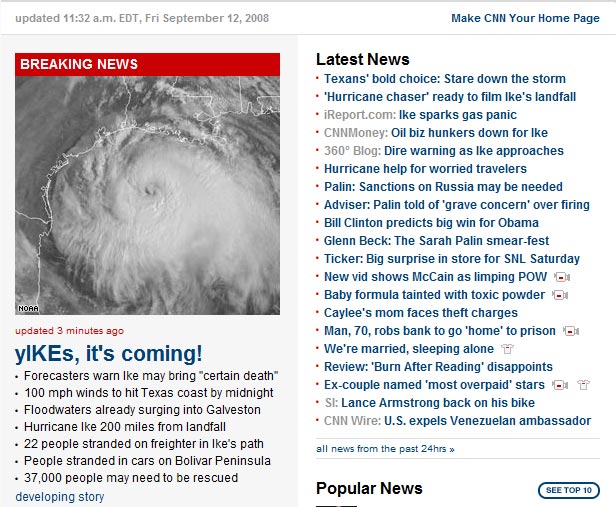

CNN mangles Hurricane Ike headline

Over on Wired Journalists, Rafael Sangiovanni, web producer for the Miami Herald, was quick enough to grab and post a shot of CNN’s questionable headline as Hurricane Ike hit the US.

Hit or miss?

(It’s certainly not going to be a hit for search engines and it looks pretty awkward in my opinion)

Editor&Publisher: Tribune had warned Google to stop crawling newspaper sites before United Airlines story

The resurfacing of a six-year-old Tribune Co article, which caused a severe drop in share prices for United Airlines, is being blamed squarely on search engines by the publisher.

Tribune has said it asked Google to stop crawling its newspaper websites ‘months ago’.

The story came to light after a single user accessed it on the Florida Sun Sentinel’s website during a period of low traffic, the publisher claimed.

This single user was enough to push the story to the top of the paper’s most viewed articles, where it subsequently came to the attention of Google’s crawlers.

Getting links with made-up content: clever marketing or unethical publishing?

A story on financial website Money.co.uk of a teenager stealing his dad’s credit card to pay for prostitutes ticked all the right boxes to be a search engine success.

And for those of you who haven’t come across it yet, it is too good to be true.

It’s made up, fictitious and fabricated to generate what the man behind it – Lyndon Antcliff – calls linkbait.

According to a blog post by Antcliff, the piece, which carried no byline and wasn’t on the news wires, attracted 14,000 links, in addition to being picked up by various other news publishers.

As he says in the post he’s not himself debating the ethics of such practice, but will ‘leave that to others who have a lot more time on their hands’, which is where this post steps in.

Yes – sites should optimise headlines for search engines and try to ensure the story delivers what the headline promises. But making content up is unethical and dangerous for a publisher’s reputation.

As Antcliff points out, it’s alarming that other media did not check the facts of the article before republishing it, and the spread of the story proves he knows what he’s doing when it comes to optimising content.

It’s just a shame the same trial couldn’t have been carried out on a real piece of content.

Despite Antcliff saying he ‘pushed the boundaries of the ridiculous to make it obvious that the story wasn’t true’, it is still available on the website and until recently carried no label identifying it as a hoax.

It now includes the note: “This story is a parody and is not intended to be taken seriously”, which doesn’t help explain things much, just makes the reader wonder why they’re publishing it.

Other media aside, doesn’t running content purely for linkbaiting purposes undermine Money.co.uk’s credibility to a worryingly low level?

BusinessWeek.com revises 2005 article on blogs because of ‘longtail’ traffic

BusinessWeek.com has looked to data from its web traffic to update a story originally published in 2005 (pointed out by The Bivings Report).

After seeing that their article ‘Blogs Will Change Your Business’ was continuing to attract significant traffic, authors Stephen Baker and Heather Green decided the demand for the information meant an updated version was necessary.

“Type in ‘blogs business’ on the search engine, and our story comes up first among the results, as of this writing. Hundreds of thousands of people are still searching ‘blogs business’ because they’re eager to learn the latest news about an industry that’s changing at warp speed. Their attention maintains our outdated relic at the top of the list. It’s self-perpetuating: They want new, we give them old,” wrote Baker and Green.

The article has not only been given the new headline ‘Social Media Will Change Your Business’, but now features annotations and updates from experts.

An editor’s note at the top of the revised piece openly explains this strategy (emphasis is mine):

“When we published ‘Blogs Will Change Your Business’ in May, 2005, Twittering was an activity dominated by small birds. Truth is, we didn’t see MySpace coming. Facebook was still an Ivy League sensation. Despite the onrush of technology, however, thousands of visitors are still downloading the original cover story.

“So we decided to update it. Over the past month, we’ve been calling many of the original sources and asking the Blogspotting community to help revise the 2005 report. We’ve placed fixes and updates into more than 20 notes; to view them, click on the blue icons. If you see more details to fix, please leave comments. The role of blogs in business is clearly an ongoing story.

“First, the headline. Blogs were the heart of the story in 2005. But they’re just one of the tools millions can use today to lift their voices in electronic communities and create their own media. Social networks like Facebook and MySpace, video sites like YouTube, mini blog engines like Twitter – they’ve all emerged in the last three years, and all are nourished by users. Social Media: It’s clunkier language than blogs, but we’re not putting it on the cover anyway. We’re just fixing it.”

The original version still exists on the site, but directs readers to the updated piece. The writers have also been using their blog on the site to gain feedback from readers on what should be changed.

So that’s re-optimising the article for search engines, meeting the demands of readers and promoting the site as an up-to-the-minute information source, all rolled into one.

Thomson Reuters debuts Calais Tagaroo

Thomson Reuters has launched Calais Tagaroo – an application for WordPress, which allows bloggers to semantically tag their content.

The plugin app automatically generates tags for people, places, facts and events, according to a release from the company, as well as finding relevant photos from Flickr to accompany posts.

Bloggers can add their own metatags too and set filters for image searches on Flickr. More tag categories will be added as the application is developed.

The plugin, which is freely available as part of the Open Calais project, is aimed at optimising blog posts for search engines.

UK national newspapers neglecting sitemaps for better search indexing

UK newspaper websites are not implementing standard protocols supported by search engines such as Yahoo and Google.

According to blogger and internet consultant Martin Belam, only two of the UK’s national newspapers use sitemap.xml, a file which lists all the pages a given site wants to be indexed by a search engine.
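For readers who have not seen one, a sitemap is just a small XML file. The sketch below builds a minimal one with Python's standard library; the `news.example.com` URLs and the `build_sitemap` helper are made up for illustration and are not taken from any of the newspapers mentioned.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(urls):
    """Return a minimal sitemap.xml document (as a string) listing the given URLs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages a site might want indexed.
pages = [
    "https://news.example.com/",
    "https://news.example.com/business/",
]
sitemap_xml = build_sitemap(pages)
print(sitemap_xml)
```

A single central file like this matches the approach described for The Scotsman; a site following the Mail's approach would presumably generate one such file per section.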

And the winners are: The Daily Mail, which has sitemaps for individual sections of the website; and The Scotsman, which has one central sitemap for all pages.

As Journalism.co.uk reported last month, TimesOnline and The Independent are the only UK national titles to support the ACAP protocol. They’ve made their choice – unsupported by the search giants – and so have the Mail and Scotsman, but what are the other papers doing to improve indexing of their content?

Newmediabytes: How to write web headlines that catch search engine spiders

Newmediabytes has some good pointers for those journalists and subs looking to get their online headlines perfectly attuned for the search engines to home in on.

Main points (click through for detailed definitions)

– Be clear and concise

– Plan headlines for searchers

– Include appropriate keywords and keyword phrases

– Include FULL NAMES of people and places where applicable

– Include DATELINES

– Keep headlines under 65 characters
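Two of the pointers above (the 65-character limit and the inclusion of keywords) are easy to check automatically. The following is only a rough sketch of how a subs' desk might do that; the `check_headline` function and the sample headline are hypothetical and do not come from Newmediabytes.

```python
MAX_HEADLINE_LENGTH = 65  # "Keep headlines under 65 characters"


def check_headline(headline, keywords):
    """Return a list of problems found in a web headline (empty if none)."""
    problems = []
    if len(headline) >= MAX_HEADLINE_LENGTH:
        problems.append(
            f"too long: {len(headline)} characters (limit is under {MAX_HEADLINE_LENGTH})"
        )
    lowered = headline.lower()
    for keyword in keywords:
        # Case-insensitive check that each expected search term appears.
        if keyword.lower() not in lowered:
            problems.append(f"missing keyword: {keyword}")
    return problems


# Illustrative headline and keywords only.
issues = check_headline("Hurricane Ike hits Texas coast", ["Hurricane Ike", "Texas"])
print(issues or "headline passes both checks")
```

The other pointers, such as clarity and planning for searchers, still need an editor's judgment.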

The site also has a video of DetNews.com web editor Leslie Rotan talking about some things to remember when writing headlines for the web.

Good Stuff.

OJR: Using Google Trends to fine-tune your news website

Google’s tool can help online publishers tweak their content to maximize traffic from search engine users, says OJR.

‘Google Trends allows you to select up to five words or phrases, then shows you how those search terms rate relative to one another in both the volume of search queries handled by Google, as well as news references tracked by the search engine. It’s an addictive site for a data geek, like me, and essential for any online publisher who wants to optimize his or her publication to attract more visitors from search engines, such as Google.’

Eric Schmidt – Google resistance to ACAP based on technology

Google CEO Eric Schmidt has denied that Google’s resistance to using ACAP is based on ‘wanting to control’ publishers’ information, insisting that it is strictly a technology issue.

Speaking to iTWire, Schmidt said: “ACAP is a standard proposed by a set of people who are trying to solve the problem [of communicating content access permissions]. We have some people working with them to see if the proposal can be modified to work in the way our search engines work. At present it does not fit with the way our systems operate.”

According to iTWire, Schmidt went on to deny that Google’s reluctance so far to use the rights and permissions technology was because Google wanted as few barriers as possible between online content and its search engines. “It is not that we don’t want them to be able to control their information.”

Schmidt made his comments after a tit-for-tat exchange last week in which Gavin O’Reilly, chairman of the World Association of Newspapers and ACAP CEO, reacted strongly to claims made by a senior Google executive that the search engine believed ACAP was an unnecessary system and that its function could be fulfilled by existing web standards.
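The report does not name the ‘existing web standards’ in question. Assuming robots.txt is the one most likely meant, the sketch below shows how a crawler reads such a file to decide which pages it may fetch, using Python's standard `urllib.robotparser`. The rules and the `news.example.com` URLs are invented for illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep Googlebot out of the archive, allow everyone else everywhere.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /archive/

User-agent: *
Disallow:
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_crawl(agent, url):
    """Return True if the parsed robots.txt rules let this crawler fetch the URL."""
    return parser.can_fetch(agent, url)


archive_url = "https://news.example.com/archive/2002/story.html"
print(may_crawl("Googlebot", archive_url))
print(may_crawl("Googlebot", "https://news.example.com/business/"))
print(may_crawl("OtherBot", archive_url))
```

Rules like these are a simple allow or disallow per crawler and path, whereas ACAP is described above as a rights and permissions technology.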